Soap and water do wonders for 90% of the restroom cleaning.

The problem is that the other 10% are important too.

Not quite. I'm focusing on chatbots like Bard, ChatGPT and the like, and on their underlying technology (LLMs, or large language models).

At their core, those LLMs work like this: they take words, split them into "tokens", and then perform a few operations on those tokens across multiple layers. But at the end of the day they still work with the words themselves, not with the meaning encoded by those words.
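To make the "split them into tokens" step concrete, here's a minimal sketch. It assumes a tiny made-up vocabulary (`VOCAB`) and a greedy longest-match split; real LLM tokenizers typically use learned schemes such as byte-pair encoding, so treat this as an illustration only. The point is that what goes into the layers is a list of integer IDs.

```python
# Toy vocabulary, invented for this example; real vocabularies are learned
# from data and hold tens of thousands of entries.
VOCAB = {"un": 0, "believ": 1, "able": 2, "cat": 3, "s": 4, "<unk>": 5}


def tokenize(word):
    """Greedy longest-match split of one word into known subword tokens."""
    tokens = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shrink it.
        for j in range(len(word), i, -1):
            if word[i:j] in VOCAB:
                tokens.append(word[i:j])
                i = j
                break
        else:
            # No known piece starts here: emit <unk> and skip one character.
            tokens.append("<unk>")
            i += 1
    return tokens


def encode(text):
    """Lowercase, split on whitespace, and map every token to its integer ID."""
    return [VOCAB[t] for w in text.lower().split() for t in tokenize(w)]


print(tokenize("unbelievable"))
print(encode("Unbelievable cats"))
```

Nothing in those IDs says what "cat" means; any meaning has to be inferred later from how the IDs co-occur in the training data.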

What I want is an LLM that assigns multiple meanings to those words, and performs the operations above on the meanings themselves. In other words, the LLM would actually understand you, not just chain words.

Yup, that's the stuff. It's mostly a finishing touch, to get rid of bacteria.

At the very least, I'd recommend you:

Everyone has the cleaning agents that they swear by, so look for something that works for you. For me it's

Important detail: do not mix any two of the cleaning agents that I've mentioned. Especially not ammonia and bleach.

For reference, the disinfecting agent that I use is called "pinho sol", but I have no idea if it's sold outside Brazil. You probably have some similar product wherever you live.

Complexity does not mean sophistication when it comes to AI, and never has; to treat it as such is just a forceful way to make your ideas come true without putting in the real effort.

It's a bit off-topic, but what I really want is a language model that assigns semantic values to the tokens, and handles those values instead of directly working with the tokens themselves. That would probably be far less complex than current state-of-the-art LLMs, but way more sophisticated, and it would require far less data for "training".

creating a label and checking the skip invoice box

That works great too, especially if you want to use less foolproof filters. Or even a mix of both strategies.

Oh "great", more crap between Ctrl and Alt.

[Grumpy grandpa] In my times, the space row only had five keys! And we did more than those youngsters do with eight, now nine keys!

Thank you! It's working now.

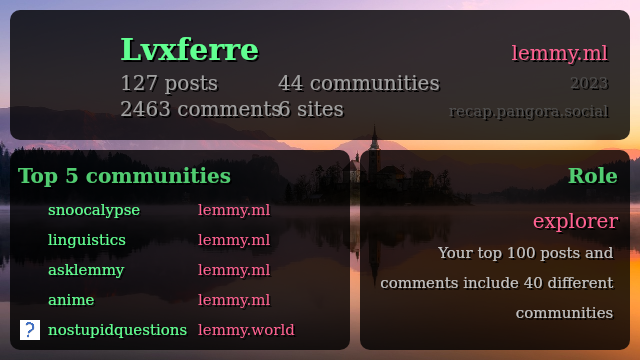

It's giving me an error, "Error Finding Entity // Make sure you spelled the entity correctly and that it exists!", when I use my username for lemmy.ml; curiously it works well when I do it for my beehaw.org account.

Create your account through old.reddit.com; when it asks you for an email, simply press "next". And, if you need an email provider for some other reason, protonmail.com doesn't ask you for your phone number.

That said, do you really need a Reddit account?

[Note: this is my personal take, not Chomsky's]

We can recognise colours and things even without properly labelling them. (Colour example: I have no clue what to call the colour of my cat's fur, but I'm fairly certain that I'd remember and thus recognise it.) However, it's hard to handle them logically this way.

And at least for me this is the main role of the internal monologue. It isn't just about repeating the state of the things, it's about connecting pieces of info together, as if I was explaining the link to another person.

Perhaps those without verbal internal monologue/dialogue have a more persistent innate language, that is not overwritten by common external language?

Possible; I don't know, really. It's also possible that the "innate language" doesn't really exist, only the innate ability to learn a language; but that ability is already enough to structure simple reasoning.

The source that I've linked mentions semantic embedding; so does further literature on the internet. However, the operations are still being performed with the vectors resulting from the tokens themselves, with said embedding playing a secondary role.

This is evident for example through excerpts like

Emphasis mine. A similar conclusion (that the LLM is still handling the tokens, not their meaning) can be reached by analysing the hallucinations that your typical LLM bot outputs, and asking why each hallucination is there.

What I'm proposing is deeper than that. It's to use the input tokens (i.e. morphemes) only to retrieve the sememes (units of meaning; further info here) that they're conveying, then discard the tokens themselves, and perform the operations solely on the sememes. Then, for the output, you translate the sememes obtained by the transformer back into morphemes (i.e. tokens).
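As a rough sketch of that pipeline, and nothing more than that: the lexicon, the sememe labels, the `RELATED` hints and all function names below are made up for illustration. The "operations" step is left as a placeholder that returns its input unchanged, since that's where the actual model would sit.

```python
# Hypothetical lexicon: each token (morpheme) maps to its candidate sememes.
LEXICON = {
    "the": ["DEFINITE"],
    "river": ["WATERCOURSE"],
    "money": ["CURRENCY"],
    "bank": ["FINANCIAL_INSTITUTION", "RIVER_EDGE"],
}

# Hypothetical hints: context sememes that favour a given reading.
RELATED = {
    "FINANCIAL_INSTITUTION": {"CURRENCY"},
    "RIVER_EDGE": {"WATERCOURSE"},
}

# Reverse mapping, used only when rendering the output.
SURFACE = {
    "DEFINITE": "the",
    "WATERCOURSE": "river",
    "CURRENCY": "money",
    "FINANCIAL_INSTITUTION": "bank",
    "RIVER_EDGE": "bank",
}


def to_sememes(tokens):
    """Retrieve one sememe per token; after this the tokens are discarded."""
    # Unambiguous tokens provide the context used to resolve ambiguous ones.
    context = {LEXICON[t][0] for t in tokens if len(LEXICON.get(t, [])) == 1}
    sememes = []
    for t in tokens:
        candidates = LEXICON.get(t, ["UNKNOWN"])
        # Pick the reading with the most support from the context;
        # on a tie, the first candidate listed wins.
        best = max(candidates, key=lambda s: len(RELATED.get(s, set()) & context))
        sememes.append(best)
    return sememes


def operate(sememes):
    """Placeholder for the model itself, which would work on sememes only."""
    return list(sememes)


def to_tokens(sememes):
    """Translate sememes back into morphemes (tokens) for the output."""
    return [SURFACE.get(s, "<unk>") for s in sememes]


meaning = operate(to_sememes(["the", "river", "bank"]))
print(meaning)
print(to_tokens(meaning))
```

Here "bank" next to "river" resolves to `RIVER_EDGE`, while next to "money" it would resolve to `FINANCIAL_INSTITUTION`. The same token ends up as two different units once the middle step sees it.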

I believe that this would have two big benefits:

And it might be an additional layer, but the whole approach is considerably simpler than what's being done currently - pretending that the tokens themselves have some intrinsic value, then playing whack-a-mole with situations where the token and the contextually assigned value (by the human using the LLM) differ.

[This could even go deeper, handling a pragmatic layer beyond the tokens/morphemes and the units of meaning/sememes. It would be closer to what @njordomir@lemmy.world understood from my other comment, as it would then deal with the intent of the utterance.]